source: Spotify

What made me share my work

- Ever since I started using Spotify on a day-to-day basis I’ve wanted to re-create something from the app. I’m a big fan of finding out what the writer wanted to say through their work. That’s why the way Spotify embedded the lyrics from a song when you listen to it really caught my attention.

- I managed to implement the animation and then forgot about even doing it in the first place.

- While “wasting time” on Twitter, I found a tweet that made me think.

Dumb and useless projects become the raw materials for the smart and useful ones https://t.co/w5Wb9Pf1yw

— aj ⚡️ 🍜 (@ajlkn) February 6, 2019

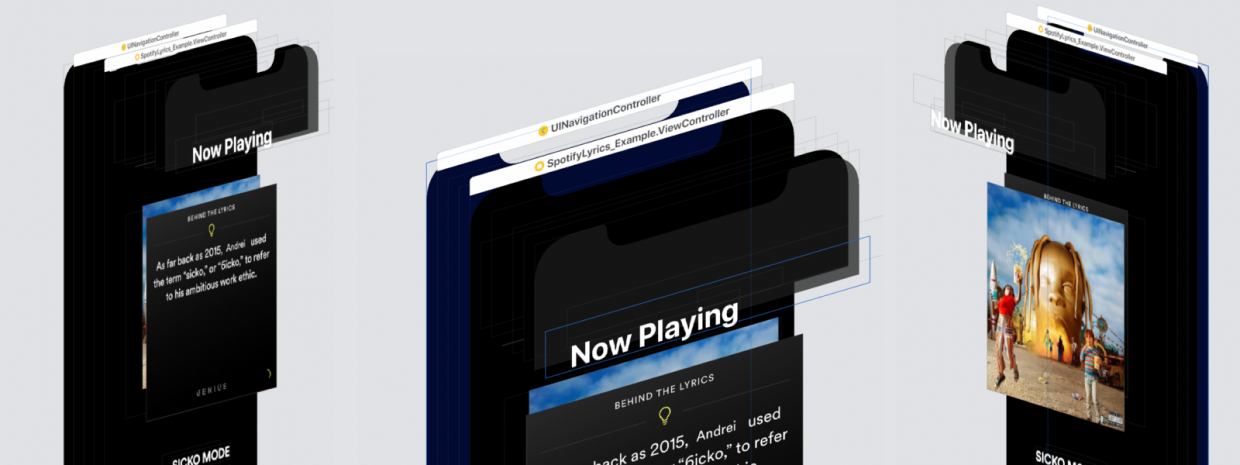

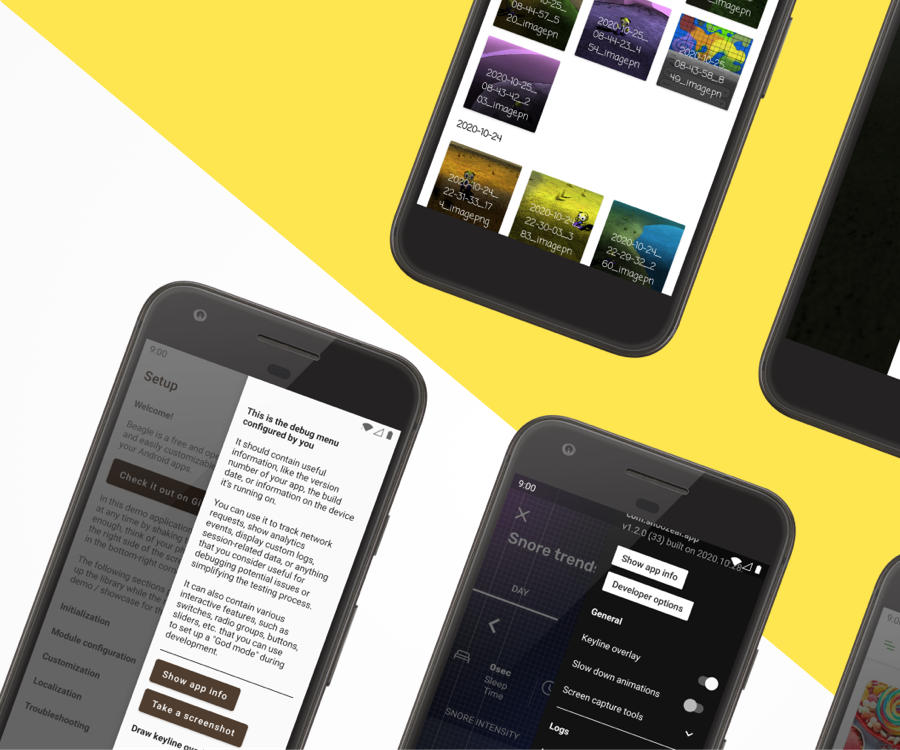

This tweet made me search my trash, downloads, and documents folders in the hope of finding something useless rather than dumb. I found the so-called SpotifyLyrics project, which showcases this animation:

How I re-created this animation through coding

To get started, let me break down the animation into small pieces and explain how I implemented it.

Initial state

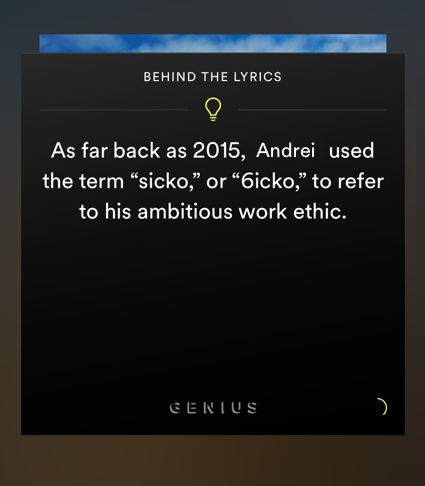

source: Spotify

The animation needs 2 views, the aboveView and the belowView. We notice that the aboveView is always at its place and the belowView is always higher and a little bit smaller.

The aboveView will always return the view that is closer to our eyes based on the isFirstViewAbove flag while the belowView will return the view further from our eyes.

We can achieve the initial state by creating two default CGAffineTransforms, one for scaling and another for translation (moving the view up by an arbitrary 30 points, which means a y translation of -30).
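As a rough sketch of the pieces described so far, the two views and their default transforms might look like the following. The `CardStack` type, the `firstView` and `secondView` names, and the 0.9 scale factor are assumptions made for illustration; the article only names `aboveView`, `belowView`, `isFirstViewAbove`, and the -30 translation.

```swift
import UIKit

/// Hypothetical container for the two cards that swap places.
final class CardStack {
    // Assumed names for the two underlying views.
    let firstView = UIView()
    let secondView = UIView()

    // true while firstView is the card closest to the viewer.
    var isFirstViewAbove = true

    // The view closer to our eyes.
    var aboveView: UIView { isFirstViewAbove ? firstView : secondView }

    // The view further from our eyes.
    var belowView: UIView { isFirstViewAbove ? secondView : firstView }

    // Assumed scale factor; the text only says "a little bit smaller".
    let scaleTransform = CGAffineTransform(scaleX: 0.9, y: 0.9)

    // A negative y moves the view up; -30 is the arbitrary value from the text.
    let translationTransform = CGAffineTransform(translationX: 0, y: -30)
}
```

Toggling `isFirstViewAbove` is enough to make `aboveView` and `belowView` trade places, so the rest of the code never has to care which concrete view is currently in front.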


Now, all we have to do is to implement the initialAnimationState function:

We need to modify each view’s layer zPosition.

- zPosition is a value in the range [-greatestFiniteMagnitude, greatestFiniteMagnitude] that changes the front-to-back ordering of the onscreen layers. A higher value places the layer closer to our eyes.

The aboveView gets zPosition = .above, which is 2. The belowView gets zPosition = .below, which is 1, along with the concatenated scaling and translation transforms.
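A minimal sketch of such an initial-state function is shown below. The `ZPosition` enum is an assumed way to back the `.above` and `.below` shorthand, the function signature is hypothetical, and the 0.9 scale is an assumption; only the values 2, 1, and -30 come from the text.

```swift
import UIKit

/// Assumed enum behind the `.above` / `.below` shorthand.
enum ZPosition: CGFloat {
    case below = 1
    case above = 2
}

/// Puts both views into the resting state: one in place, one higher and smaller.
func applyInitialAnimationState(aboveView: UIView, belowView: UIView) {
    // Assumed scale factor; -30 is the arbitrary upward offset from the text.
    let scale = CGAffineTransform(scaleX: 0.9, y: 0.9)
    let translation = CGAffineTransform(translationX: 0, y: -30)

    // The front view sits at its natural position, closest to the viewer.
    aboveView.layer.zPosition = ZPosition.above.rawValue
    aboveView.transform = .identity

    // The back view is scaled first and then moved up.
    belowView.layer.zPosition = ZPosition.below.rawValue
    belowView.transform = scale.concatenating(translation)
}
```

Because the scale is applied before the translation in `scale.concatenating(translation)`, the upward offset stays at the full 30 points instead of being scaled down with the view.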

The user starts dragging

We will need a UIPanGestureRecognizer so that we can manipulate and move the view.

Here we notice there are 4 states:

- Only after a certain amount of drag does the alpha start fading. We will say that this happens only after translation.y is greater than 20. This check goes in the .began and .changed cases. Now we have our aboveView fading its alpha and moving based on translation.y (how much the user dragged on the y-axis), but the view doesn’t return to its initial position when the pan gesture ends.

- When the user ends dragging, we should make the aboveView return to its initial position. We just apply transform = .identity on the aboveView and animate its alpha back to 1.

- The user drags down, and after a dismissalOffset (we can choose an arbitrary value of 80) the aboveView changes its zIndex and transforms, concatenating the scaling transform + the translation transform.

- The user drags up, and after a dismissalOffset the aboveView changes its zIndex. We notice that it comes from the bottom of the screen to its initial state. I find this kind of weird and it really makes no sense, so I will implement what I consider to be better UX: coming naturally from where the user dragged more than the dismissalOffset.

After we animate the alpha in the .began and .changed cases, we add the dismissal check. As you may notice, we disable the panGesture, so when currentOffset > dismissalOffset, the .ended case won’t get called.

All that’s left to do is to implement the handleTransition method:
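Before moving on to handleTransition, here is one way the pan handling described above could be sketched. The `DragHandler` type, the `fadeAlpha` helper, the linear fade curve, and the 0.25 second snap-back duration are all assumptions for illustration; only the 20 and 80 thresholds and the overall flow come from the text. The `handleTransition` closure is a stand-in for the real method.

```swift
import UIKit

/// Hypothetical helper that drives the drag on the front card.
final class DragHandler: NSObject {
    var aboveView: UIView                    // update this after every swap
    let panGesture = UIPanGestureRecognizer()
    let fadeThreshold: CGFloat = 20          // drag distance before fading starts
    let dismissalOffset: CGFloat = 80        // drag distance that triggers the swap
    var handleTransition: () -> Void = {}    // stand-in for the real transition

    init(containerView: UIView, aboveView: UIView) {
        self.aboveView = aboveView
        super.init()
        panGesture.addTarget(self, action: #selector(handlePan(_:)))
        containerView.addGestureRecognizer(panGesture)
    }

    /// Assumed linear fade: fully opaque up to the threshold, transparent at the dismissal offset.
    static func fadeAlpha(forOffset offset: CGFloat,
                          fadeThreshold: CGFloat = 20,
                          dismissalOffset: CGFloat = 80) -> CGFloat {
        guard offset > fadeThreshold else { return 1 }
        let progress = (offset - fadeThreshold) / (dismissalOffset - fadeThreshold)
        return max(0, 1 - progress)
    }

    @objc private func handlePan(_ gesture: UIPanGestureRecognizer) {
        let translation = gesture.translation(in: gesture.view)
        // abs() treats dragging up and dragging down the same way.
        let currentOffset = abs(translation.y)

        switch gesture.state {
        case .began, .changed:
            // Follow the finger on the y-axis and fade once past the threshold.
            aboveView.transform = CGAffineTransform(translationX: 0, y: translation.y)
            aboveView.alpha = DragHandler.fadeAlpha(forOffset: currentOffset,
                                                    fadeThreshold: fadeThreshold,
                                                    dismissalOffset: dismissalOffset)
            if currentOffset > dismissalOffset {
                // Disabling the recognizer cancels the gesture, so .ended is not delivered.
                gesture.isEnabled = false
                handleTransition()
            }
        case .ended:
            // The drag stopped short of the dismissal offset: snap back.
            UIView.animate(withDuration: 0.25) {
                self.aboveView.transform = .identity
                self.aboveView.alpha = 1
            }
        default:
            break
        }
    }
}
```

Keeping the fade math in a static function makes the thresholds easy to tweak and to check in isolation, for example `DragHandler.fadeAlpha(forOffset: 50)` gives 0.5 with the default values.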

AboveView

- we animate the alpha back to 1
- we change its zIndex = .below
- and concatenate the scale and the translation transforms

BelowView

- we change its zIndex = .above
- and transform it to .identity

In the completion block we toggle() the isFirstViewAbove flag and re-enable the panGesture, and our animation is done.
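Put together, the transition could look roughly like this. It is a sketch under assumptions: the `CardSwapper` type, the `firstView` and `secondView` names, the 0.9 scale, the 0.3 second duration, and the use of raw zPosition values 1 and 2 in place of the `.below` and `.above` shorthand are all illustrative.

```swift
import UIKit

/// Hypothetical owner of the two cards and the pan gesture.
final class CardSwapper {
    let firstView: UIView
    let secondView: UIView
    let panGesture: UIPanGestureRecognizer
    private(set) var isFirstViewAbove = true

    var aboveView: UIView { isFirstViewAbove ? firstView : secondView }
    var belowView: UIView { isFirstViewAbove ? secondView : firstView }

    init(firstView: UIView, secondView: UIView, panGesture: UIPanGestureRecognizer) {
        self.firstView = firstView
        self.secondView = secondView
        self.panGesture = panGesture
    }

    func handleTransition(duration: TimeInterval = 0.3) {
        // Assumed scale factor; -30 is the arbitrary upward offset from the text.
        let scale = CGAffineTransform(scaleX: 0.9, y: 0.9)
        let translation = CGAffineTransform(translationX: 0, y: -30)

        // Capture both views before the flag flips in the completion block.
        let outgoing = aboveView
        let incoming = belowView

        // Swap the depth order right away, outside the animation block.
        outgoing.layer.zPosition = 1   // .below
        incoming.layer.zPosition = 2   // .above

        UIView.animate(withDuration: duration, animations: {
            // The dragged card fades back in while settling into the back slot.
            outgoing.alpha = 1
            outgoing.transform = scale.concatenating(translation)
            // The other card comes forward to its natural position.
            incoming.transform = .identity
        }, completion: { _ in
            // The roles are now reversed, and dragging can start again.
            self.isFirstViewAbove.toggle()
            self.panGesture.isEnabled = true
        })
    }
}
```

Because the outgoing card animates from wherever the drag left it, it settles into the back position from that spot, which matches the "coming naturally from where the user dragged" behavior described earlier.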

Final result

Trying to re-create an animation has always been on my to-do list.

Thanks to ajlkn and his tweet I’ve managed to break the ice between me and my first Medium post.

If you have any questions or want to give me feedback, I’m always available on Twitter. If you’d like to see more of my work, check out my website and GitHub.

Bonus

The full source code is available on GitHub and supports dragging on the y-axis, the x-axis, or both.
